If you manage or work on a software team, you have probably heard someone mention DORA metrics. They have become the standard way to measure how well a team delivers software. But what exactly are they, and why should your team track them?

What is DORA?

DORA stands for DevOps Research and Assessment. It started as a research programme led by Dr. Nicole Forsgren, Jez Humble, and Gene Kim. Over several years they surveyed tens of thousands of engineering professionals and identified four key metrics that reliably predict software delivery performance and organisational outcomes. Their findings are published in the annual Accelerate State of DevOps Report and the book Accelerate.

The DORA team is now part of Google Cloud, and the four metrics have become the de facto standard that engineering leaders use to understand delivery health.

The four DORA metrics

DORA metrics are split into two pairs. Two measure throughput (how fast you ship) and two measure stability (how reliably you ship).

1. Deployment Frequency

How often your team successfully deploys to production. Elite teams deploy multiple times per day. Lower-performing teams may only deploy monthly or less. Higher frequency generally means smaller batch sizes, which reduces risk and accelerates feedback.
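As a rough sketch, deployment frequency can be computed by counting successful production deployments in a window and dividing by the number of days in it. The `Deployment` record and its fields are hypothetical; in practice this data would come from your CI/CD provider.

```python
from dataclasses import dataclass
from datetime import date, datetime


@dataclass
class Deployment:
    # Hypothetical record shape; field names are illustrative only.
    finished_at: datetime
    environment: str
    succeeded: bool


def deployment_frequency(deployments: list[Deployment], start: date, end: date) -> float:
    """Average successful production deployments per day, start and end inclusive."""
    days = (end - start).days + 1
    if days <= 0:
        raise ValueError("end must not be before start")
    # Only successful deployments to production inside the window count.
    successful = sum(
        1
        for d in deployments
        if d.succeeded
        and d.environment == "production"
        and start <= d.finished_at.date() <= end
    )
    return successful / days
```

Three successful production deployments across a seven-day window work out to roughly 0.43 deployments per day; failed runs and non-production environments are ignored.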

2. Lead Time for Changes

The time between a change being committed and that code running in production. This captures everything from code review wait time to CI pipeline duration to deployment orchestration. Elite teams achieve lead times of under one hour.
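A minimal sketch of the calculation, assuming you already have, for each change, the time it was committed and the time it reached production. Summarising with the median is an assumption on our part, since the article does not say how individual lead times are aggregated; a median stops one long-lived change from dominating the figure.

```python
from datetime import datetime, timedelta
from statistics import median


def lead_time_for_changes(changes: list[tuple[datetime, datetime]]) -> timedelta:
    """Median time from commit to running in production.

    `changes` holds (committed_at, deployed_at) pairs, one per change.
    """
    if not changes:
        raise ValueError("no deployed changes in the window")
    durations = [(deployed - committed).total_seconds() for committed, deployed in changes]
    if any(d < 0 for d in durations):
        raise ValueError("a change cannot be deployed before it was committed")
    # Work in seconds so the median is computed on plain numbers.
    return timedelta(seconds=median(durations))
```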

3. Change Failure Rate

The percentage of deployments that cause a failure in production — a rollback, a hotfix, or a degraded service. This metric captures the quality of what you are shipping. Elite teams keep their change failure rate below 5%.
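Change failure rate is a simple ratio. In this sketch, the hypothetical `ProductionDeployment` flags mirror the three failure types named above. Deciding which deployments set them (for example, linking a hotfix back to the deployment it repaired) is the hard part and is left to your tooling.

```python
from dataclasses import dataclass


@dataclass
class ProductionDeployment:
    # Hypothetical flags; how each one gets set depends on your incident tooling.
    rolled_back: bool = False
    needed_hotfix: bool = False
    degraded_service: bool = False

    @property
    def failed(self) -> bool:
        # Any of the three outcomes marks the deployment as failed (counted once).
        return self.rolled_back or self.needed_hotfix or self.degraded_service


def change_failure_rate(deployments: list[ProductionDeployment]) -> float:
    """Percentage of production deployments that caused a failure."""
    if not deployments:
        raise ValueError("no production deployments in the window")
    failures = sum(1 for d in deployments if d.failed)
    return 100 * failures / len(deployments)
```

With 19 clean deployments and one rollback, the function returns 5.0, right at the elite boundary.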

4. Mean Time to Recovery (MTTR)

When a deployment does cause a failure, how long does it take to restore service? This measures your team's ability to detect, diagnose, and fix problems. Elite teams recover in under one hour.
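MTTR is the average of recovery durations. This sketch assumes each incident has already been linked to a deployment and recorded as a pair of hypothetical timestamps: when the failure started and when service was restored. Whether the clock starts at failure onset or at detection is a definitional choice; the sketch simply uses whatever start time you pass in.

```python
from datetime import datetime, timedelta


def mean_time_to_recovery(incidents: list[tuple[datetime, datetime]]) -> timedelta:
    """Mean time from a deployment-caused failure to service being restored.

    `incidents` holds (failure_started_at, service_restored_at) pairs.
    """
    if not incidents:
        raise ValueError("no incidents in the window")
    durations = [restored - started for started, restored in incidents]
    if any(d < timedelta(0) for d in durations):
        raise ValueError("service cannot be restored before the failure started")
    # Sum the timedeltas, then divide by the incident count.
    return sum(durations, timedelta(0)) / len(durations)
```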

Why these four metrics matter together

It is tempting to focus on speed alone — deploy more often, cut lead times. But speed without stability is just moving fast and breaking things. The power of DORA metrics is that they balance both sides.

The DORA research consistently shows that elite teams are not trading off speed for stability. They are better at both. They deploy more frequently and have lower failure rates. This counterintuitive finding is one of the most important insights from the programme: speed and stability reinforce each other when you invest in the right practices.

DORA performance levels

The DORA research classifies teams into four performance levels based on their metrics:

| Metric | Elite | High | Medium | Low |
|---|---|---|---|---|
| Deployment Frequency | Multiple per day | Once per week to once per month | Once per month to once every six months | Less than once every six months |
| Lead Time for Changes | < 1 hour | 1 day to 1 week | 1 week to 1 month | > 6 months |
| Change Failure Rate | 0–5% | 5–10% | 10–15% | > 15% |
| Mean Time to Recovery | < 1 hour | < 1 day | 1 day to 1 week | > 6 months |

Source: DORA State of DevOps Report benchmarks. These thresholds can be customised in CI/CD Watch settings.
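Because the levels are threshold bands, classification is a lookup against upper bounds. The sketch below encodes only the change failure rate row from the table. Treating a value exactly on a boundary (such as 5%) as the better level is an assumption, since the table's ranges share their edges. The `upper_bounds` parameter stands in for customised thresholds; it is not CI/CD Watch's actual settings format.

```python
from bisect import bisect_left

LEVELS = ("Elite", "High", "Medium", "Low")

# Inclusive upper bounds (percent) for Elite, High and Medium change failure rate,
# taken from the table above. Anything above the last bound is Low.
CFR_UPPER_BOUNDS = (5.0, 10.0, 15.0)


def classify_change_failure_rate(
    rate_percent: float, upper_bounds: tuple[float, ...] = CFR_UPPER_BOUNDS
) -> str:
    """Map a change failure rate to a performance level.

    A value exactly on a boundary is assigned to the better level (an assumption).
    """
    if len(upper_bounds) != len(LEVELS) - 1:
        raise ValueError("expected one upper bound per level except Low")
    if not 0.0 <= rate_percent <= 100.0:
        raise ValueError("rate_percent must be between 0 and 100")
    # bisect_left gives the index of the first bound >= rate_percent,
    # which is also the index of the matching level.
    return LEVELS[bisect_left(upper_bounds, rate_percent)]
```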

Who should track DORA metrics?

DORA metrics are useful at every level of an engineering organisation:

- Engineering managers use them to understand team delivery health, set improvement goals, and communicate progress to leadership. See our engineering managers use case.

- Tech leads use them to identify bottlenecks — is lead time high because of slow CI, long code reviews, or deployment queues?

- Platform and DevOps teams use them to measure the impact of infrastructure investments — did that caching change actually improve lead times?

- Developers use them as a shared language to discuss delivery performance without finger-pointing.

Common mistakes when adopting DORA metrics

DORA metrics are powerful, but they can be misused. Here are the most common pitfalls:

Using metrics as individual performance targets

DORA metrics measure team and system performance, not individual productivity. Using them to evaluate individual developers creates perverse incentives — gaming deployments, avoiding risky changes, or rushing fixes instead of doing proper root cause analysis.

Tracking without acting

Dashboards are only useful if they drive decisions. When you see lead time creeping up, dig into why. Is your CI pipeline getting slower? Are PRs sitting in review? The metric is a signal — follow it to the root cause.
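One way to follow a rising lead time to its cause is to split each change's lead time into stages and see which stage accounts for the most time. The milestone names below (`pr_opened_at`, `approved_at` and so on) are hypothetical placeholders for whatever events your version control and CI systems actually record.

```python
from datetime import datetime, timedelta

# Hypothetical stages: (label, start milestone, end milestone).
STAGES = [
    ("waiting for review", "pr_opened_at", "approved_at"),
    ("merge after approval", "approved_at", "merged_at"),
    ("CI pipeline", "merged_at", "ci_finished_at"),
    ("deployment queue", "ci_finished_at", "deployed_at"),
]


def stage_durations(milestones: dict[str, datetime]) -> dict[str, timedelta]:
    """Time spent in each stage for a single change."""
    return {name: milestones[end] - milestones[start] for name, start, end in STAGES}


def slowest_stage(changes: list[dict[str, datetime]]) -> tuple[str, timedelta]:
    """Sum each stage across all changes and return the stage with the most total time."""
    if not changes:
        raise ValueError("no changes to analyse")
    totals = {name: timedelta(0) for name, _, _ in STAGES}
    for milestones in changes:
        for name, duration in stage_durations(milestones).items():
            totals[name] += duration
    return max(totals.items(), key=lambda item: item[1])
```

Summing rather than averaging highlights where the team's total waiting time goes; averaging per change would answer a slightly different question.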

Optimising one metric at the expense of others

Deploying more frequently is not useful if your change failure rate spikes. The four metrics are designed to be tracked together precisely to prevent this kind of optimisation tunnel vision.
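A simple guard against this tunnel vision is to compare two periods and flag any case where throughput improved while a stability metric got worse. This is a naive sketch: `DoraSnapshot` is a hypothetical container, and a real check would want enough deployments in each period for the comparison to be meaningful.

```python
from dataclasses import dataclass


@dataclass
class DoraSnapshot:
    # Hypothetical summary of one period's metrics.
    deploys_per_day: float
    lead_time_hours: float
    change_failure_rate_pct: float
    mttr_hours: float


def tradeoff_warnings(before: DoraSnapshot, after: DoraSnapshot) -> list[str]:
    """Flag periods where throughput improved while stability got worse."""
    throughput_improved = (
        after.deploys_per_day > before.deploys_per_day
        or after.lead_time_hours < before.lead_time_hours
    )
    warnings = []
    if throughput_improved and after.change_failure_rate_pct > before.change_failure_rate_pct:
        warnings.append("change failure rate rose while throughput improved")
    if throughput_improved and after.mttr_hours > before.mttr_hours:
        warnings.append("time to recovery rose while throughput improved")
    return warnings
```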

How to start tracking DORA metrics

The biggest barrier to DORA metric adoption is data collection. The metrics require correlating data across your version control system, CI pipelines, and deployment processes. Many teams try to build this themselves with scripts and dashboards, but maintaining that infrastructure becomes a project of its own.
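To give a sense of what that correlation involves, here is a minimal sketch of one piece of it: attributing each main-branch commit to the first production deployment that shipped it, so a per-commit lead time can be computed. It assumes a linear main history (deploying a SHA ships that commit and every earlier one) and that the lead-time clock starts when a commit lands on main. It is an illustration only, not how CI/CD Watch performs the calculation.

```python
from datetime import datetime, timedelta


def lead_times_by_commit(
    main_history: list[tuple[str, datetime]],
    deployments: list[tuple[str, datetime]],
) -> dict[str, timedelta]:
    """Attribute each main-branch commit to the first deployment that shipped it.

    main_history: (sha, committed_at) in the order commits landed on main, oldest first.
    deployments: (deployed_sha, deployed_at) for successful production deploys, oldest first.
    """
    position = {sha: i for i, (sha, _) in enumerate(main_history)}
    lead_times: dict[str, timedelta] = {}
    shipped_up_to = -1  # index of the newest commit already in production
    for deployed_sha, deployed_at in deployments:
        head = position.get(deployed_sha)
        if head is None or head <= shipped_up_to:
            continue  # unknown SHA, or a redeploy/rollback of code already shipped
        # Every commit between the previous deploy and this one ships now.
        for sha, committed_at in main_history[shipped_up_to + 1 : head + 1]:
            lead_times[sha] = deployed_at - committed_at
        shipped_up_to = head
    return lead_times
```

Redeploys or rollbacks to an older SHA are skipped, so each commit is credited to the deployment that first put it in production.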

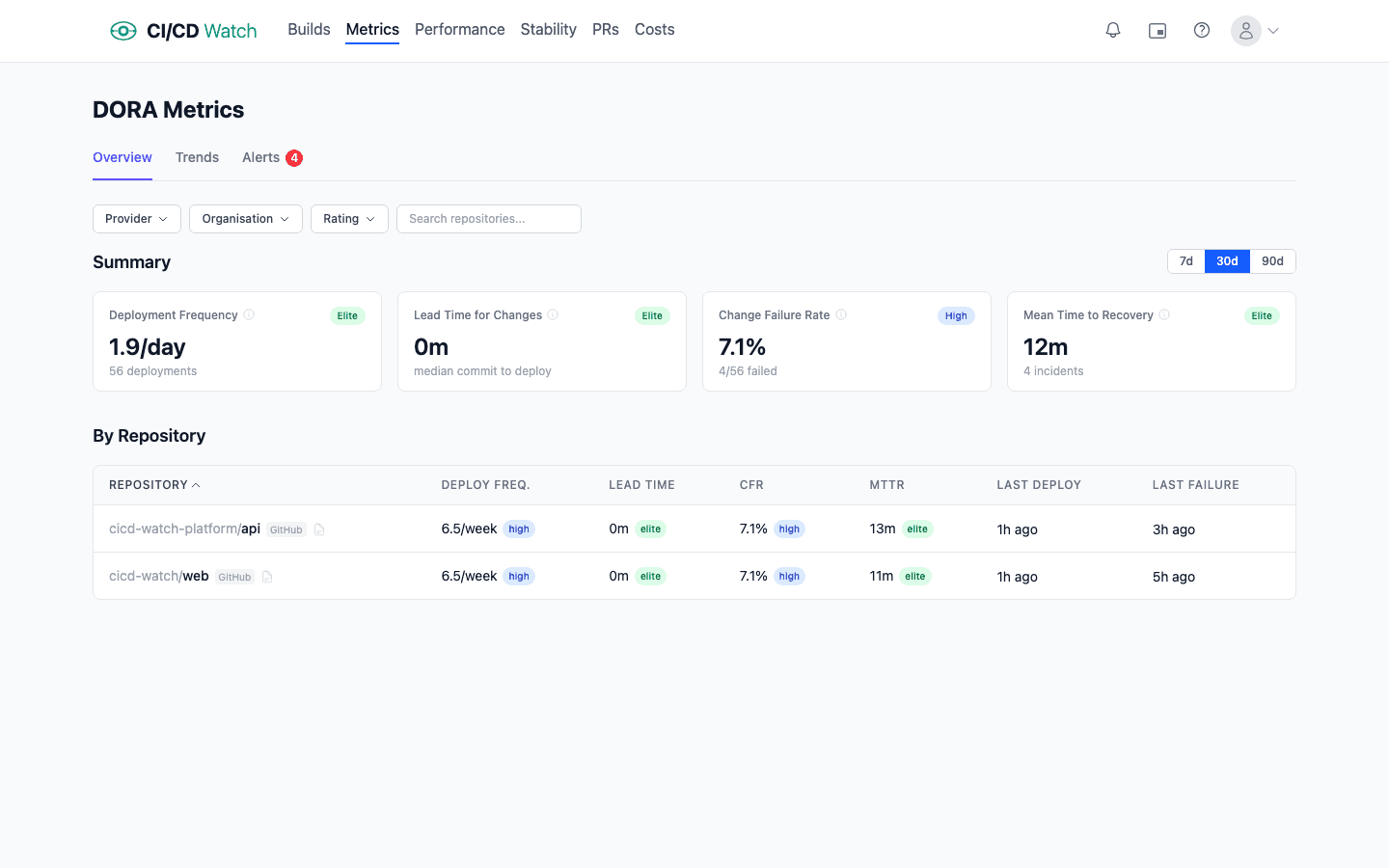

CI/CD Watch calculates all four DORA metrics automatically from your existing CI/CD pipeline data. Connect your provider — GitHub Actions, GitLab CI, Bitbucket Pipelines, CircleCI, Azure DevOps, or Jenkins — and your metrics appear within minutes. No manual tagging, no custom instrumentation.

You can read more about how we calculate each metric in our DORA metrics documentation.

Key takeaways

- DORA metrics are the industry standard for measuring software delivery performance

- Four metrics — deployment frequency, lead time, change failure rate, and MTTR — balance speed with stability

- Elite teams excel at both throughput and stability — they are not trade-offs

- Track metrics at the team level, not the individual level

- Automate data collection — manual tracking does not scale