A platform engineer opens the GitHub Actions bill for the quarter. $5,400 across twenty repositories. It is high enough that Finance has asked for a breakdown. The engineer writes one, flags the obvious offenders (a nightly build running hot on macOS, a matrix strategy fanning out to twelve jobs when six would do), and ships a memo.

What the memo does not say is that the $5,400 is the most visible layer of what CI/CD actually costs the business. The larger costs never appear on any invoice.

The true cost of CI/CD sits in three layers:

- Direct cost is what your provider bills plus what your team pays to wait: compute cost and developer wait time, the two numbers you can attribute to a specific pipeline run.

- Organisational cost is the drag that slow or flaky pipelines exert on your team's delivery feedback loop, which is the signal measured by DORA metrics.

- Business cost is what that drag adds up to in outcomes: features shipping later, defects reaching customers for longer, business objectives slipping because the team cannot learn and correct fast enough to hit them.

Most cost conversations stop at the first layer. Seen together, the three tell you whether your team's CI/CD practices are shortening the delivery feedback loop or quietly widening it. Within the direct layer, developer wait time exceeds compute by more than an order of magnitude, and the direct cost in turn feeds through to the organisational and business layers.

The two direct costs: compute and wait time

The direct cost of CI/CD splits cleanly into two categories, and most teams account for exactly one of them.

Compute cost is what the provider bills you. Runner minutes, build minutes, deployment minutes, storage for artefacts and caches. It arrives as a monthly invoice with a line-item breakdown and lands in whichever accounting code Finance has set up for it. Because it has an invoice, it gets managed.

Developer wait time is what your team pays every time a pipeline is slow or needs to be rerun. An engineer pushes a commit, then waits: sometimes they context-switch, sometimes they stare at the runs page, sometimes they leave for a coffee and come back to find the run failed on a flaky test at minute eighteen. That waiting is paid out of salaries, not a provider invoice, and because it has no invoice it usually goes unmanaged.

Both are real money. The order of magnitude between them is the thing teams tend to get wrong.

A third, smaller line sits alongside these two: platform maintenance, the cost of keeping CI/CD itself running. It can dominate where teams run their own CI/CD infrastructure (Jenkins being the canonical case); elsewhere it is usually absorbed into the platform team's budget.

Compute cost: what is actually billed

Every CI/CD provider bills differently, but the underlying model is similar: per-minute metering against a tier of included minutes, usually with a multiplier for runner type or operating system.

GitHub Actions is the canonical example. Public repositories are free. Private repositories get included minutes tied to plan (2,000 per month on Free, 3,000 on Team, 50,000 on Enterprise Cloud) and then overage at $0.008 per Linux minute. Windows multiplies the rate by 2; macOS by 10. Larger runners bill at a higher per-minute rate. Self-hosted runners are free on GitHub's side, but you carry the infrastructure cost yourself.
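The metering model above can be sketched as a small calculation. This is a minimal sketch, assuming the rates quoted in this section and assuming that included minutes are consumed at the multiplied rate (one macOS minute uses ten included minutes). The function name, the usage figures, and the 2,000-minute allowance are illustrative, and current rates should be checked against the provider's rate card.

```python
# Rates and multipliers as quoted in the text; treat them as assumptions
# for illustration, not as a current rate card.
LINUX_RATE_USD = 0.008
OS_MULTIPLIER = {"linux": 1, "windows": 2, "macos": 10}


def monthly_overage_usd(minutes_by_os: dict[str, float], included_minutes: float) -> float:
    """Estimate one month's overage for private-repository usage.

    Assumes included minutes are consumed at the multiplied rate, so the
    allowance is compared against Linux-equivalent minutes.
    """
    billable = sum(
        OS_MULTIPLIER[os_name] * minutes for os_name, minutes in minutes_by_os.items()
    )
    overage_minutes = max(0.0, billable - included_minutes)
    return overage_minutes * LINUX_RATE_USD


# Hypothetical month of usage on a plan with 2,000 included minutes.
usage = {"linux": 4_000, "windows": 500, "macos": 300}
print(f"${monthly_overage_usd(usage, included_minutes=2_000):.2f}")
```

In this hypothetical month the 300 macOS minutes consume as much allowance as 3,000 Linux minutes, which is how a small macOS job can end up driving most of the overage.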

GitLab CI, Bitbucket Pipelines, and CircleCI each run a variation on the same theme: included minutes, overage billing, OS or runner-size multipliers (GitLab applies a cost factor per runner type; CircleCI meters credits per minute per resource class; Bitbucket multiplies steps by a runner-size factor up to 32x).

Azure DevOps is a different shape: it sells parallel job slots at a flat monthly rate per concurrent pipeline rather than billing per minute, so cost scales with how many jobs you need to run at once, not how long they take.

Jenkins is different again because it is self-administered by default: compute cost is whatever your infrastructure team spends on the EC2 or on-prem hardware the agents run on, plus the time of the people who keep Jenkins itself running.

| Provider | Meter | Multipliers | Infra responsibility |
|---|---|---|---|
| GitHub Actions | Per minute | Windows 2x, macOS 10x, larger runners tiered | Provider hosts (self-hosted optional) |
| GitLab CI | Per compute minute | Cost factor per runner type and project type | Provider hosts (self-hosted optional) |
| Bitbucket Pipelines | Per minute | Runner size multiplier (1x / 2x / 4x / 8x / 16x / 32x) | Provider hosts (self-hosted optional) |
| CircleCI | Per minute (credits) | Resource class tiers the credit cost | Provider hosts (self-hosted optional) |
| Azure DevOps | Per parallel job | Microsoft-hosted vs self-hosted pricing tiers | Provider hosts (self-hosted optional) |
| Jenkins | Infra cost + operator time | Whatever your hardware charges | Always self-hosted |

The shared theme across the per-minute providers is metering with a multiplier that punishes the wrong OS or oversized runner choice. The shared trap: cost increases that look like usage growth are often a misconfigured multiplier on a workload that did not need it. Azure DevOps hides the equivalent trap behind a different shape of bill: over-provisioned parallel slots rather than over-multiplied minutes.

Storage is the easy-to-miss line item inside compute. Artefact retention, log retention, test-result archives, and container registries accumulate every day and are rarely pruned. Teams that have never reviewed retention policies routinely find storage eating a non-trivial share of the monthly bill, and most providers charge for it on top of compute minutes.

The pricing models are configurable enough that hardcoding any one provider's rates as universal is a mistake. For concrete numbers across the six providers, see the breakdown of what GitHub Actions actually costs and the typical ranges for cost per build. The headline is that compute cost scales with pipeline minutes, matrix width, and runner size, and every one of those is under your team's control.

Compute spend per developer varies widely with runner choice and matrix width. On a customer workload running on GitHub Actions we see roughly $16 per developer per month for a team with modest workloads. Teams on macOS runners or heavy matrix builds routinely run multiples of that.

Developer wait time: the cost category everyone ignores

Here is the worked example that reframes the conversation. A platform team runs a 20-minute pre-merge pipeline. The team has 100 engineers. The fully-loaded cost of an engineer (salary plus benefits plus overhead) is $75 per hour, which is conservative for most markets. Each engineer pushes, on average, three commits per day that invoke the full pipeline.

20 minutes × 100 engineers × 3 commits per day × $75 per hour works out to $7,500 of wait time every working day. Across a 250-day work year that is roughly $1.9 million. The same workload on a GitHub-hosted Linux runner at $0.008 per minute costs about $12,000 per year in compute. The wait-time number is roughly two orders of magnitude larger than the compute number.
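The arithmetic in this worked example can be reproduced in a few lines. This is a sketch under the example's own assumptions: every run blocks one engineer for the full pipeline duration, and each run bills one runner-minute per pipeline minute. The function names are illustrative.

```python
WORK_DAYS_PER_YEAR = 250


def daily_wait_cost_usd(pipeline_min, engineers, runs_per_engineer, hourly_rate_usd):
    """Salary cost of engineers waiting on pipelines for one working day."""
    hours_waiting = pipeline_min / 60 * engineers * runs_per_engineer
    return hours_waiting * hourly_rate_usd


def daily_compute_cost_usd(pipeline_min, engineers, runs_per_engineer, rate_per_min_usd):
    """Runner cost for one working day, one billed minute per pipeline minute."""
    return pipeline_min * engineers * runs_per_engineer * rate_per_min_usd


# Figures from the worked example: 20-minute pipeline, 100 engineers,
# 3 runs each per day, $75 per hour, $0.008 per Linux runner minute.
wait = daily_wait_cost_usd(20, 100, 3, 75) * WORK_DAYS_PER_YEAR
compute = daily_compute_cost_usd(20, 100, 3, 0.008) * WORK_DAYS_PER_YEAR
print(round(wait), round(compute), round(wait / compute))
```

With these inputs the annual wait cost comes to $1,875,000 against $12,000 of compute, a ratio of about 156 to 1. Changing any one input, such as the hourly rate or the pipeline duration, shows how quickly the gap moves.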

The answer to “but developers context-switch while waiting, they do not really lose the full time” is that context-switching has its own well-documented cost: returning to deep work after an interruption takes roughly twenty minutes. Even if you cut the wait figure in half to account for partial productivity during waits, you are still more than an order of magnitude above the compute bill.

Wait time also hides in places that are not the pipeline itself. A pull request that sits for two days waiting for CI to finish is an unusual pathology. A pull request that sits for two days waiting for a human reviewer to merge it, because every earlier attempt hit a flake and nobody wants to kick the pipeline a fifth time, is common. Sequential pipeline stages that could run in parallel add wait time without adding compute. Runner queues during peak hours add wait time without adding compute. Deployment gates that require a manual approval add wait time without adding compute. Each of these costs the same hourly rate per engineer blocked, and none of them shows up on any provider invoice.

Developer wait time is the dominant component of CI/CD cost for almost every team larger than a few people. It is also the one component Finance never sees, because nobody sends an invoice for it.

Wait time also feeds DORA metrics. Long pipelines lengthen change lead time, one of the four original DORA metrics: if you already measure lead time, you already measure a proxy for pipeline wait.

Waste categories: where the budget disappears

Once you accept the compute-plus-wait taxonomy, every waste category applies to both sides of the ledger. Each wasted minute is compute the provider billed and wait a developer paid. Here are the categories we see most often across customer workloads.

Reruns from flakiness

A flaky test fails, the pipeline reruns, the test passes, the team moves on. The cost is that the entire pipeline ran twice: compute doubled, wait time doubled. On a customer workload in a recent 30-day window we observed a 5.8% rerun rate, roughly one commit in seventeen needing to be kicked again. Unchecked flakiness pushes that well into double digits, and every percentage point of rerun rate compounds with pipeline duration.

Reruns are usually a symptom of weak test hygiene rather than a technical problem to filter out. Quarantining the top-offender tests is cheaper than accepting a permanent rerun tax.
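Finding the top offenders can start from nothing more than per-attempt test results. The sketch below assumes a hypothetical export of `(commit_sha, test_name, passed)` tuples, one per attempt, and treats a test as flaky on a commit when it both passed and failed on that same commit. Real exports will need mapping into this shape first.

```python
from collections import Counter, defaultdict


def rank_flaky_tests(results):
    """Rank tests by the number of commits on which they flaked.

    `results` is an iterable of (commit_sha, test_name, passed) tuples,
    one per attempt. The tuple layout is an assumption for illustration.
    """
    outcomes = defaultdict(set)
    for sha, test, passed in results:
        outcomes[(sha, test)].add(passed)
    # Flaky on a commit means both outcomes were seen for the same code.
    flaky = Counter(
        test for (sha, test), seen in outcomes.items() if seen == {True, False}
    )
    return flaky.most_common()


# Hypothetical results: test_cart fails consistently on c3, so it is
# a real failure rather than a flake and does not appear in the ranking.
results = [
    ("a1", "test_login", False), ("a1", "test_login", True),
    ("a1", "test_cart", True),
    ("b2", "test_login", False), ("b2", "test_login", True),
    ("b2", "test_export", False), ("b2", "test_export", True),
    ("c3", "test_cart", False),
]
print(rank_flaky_tests(results))
```

The head of the ranking is the quarantine candidate list. Tests that fail on every attempt are deliberately excluded, since those point to a genuine regression.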

Oversized runners

Defaults are sticky. Teams pick a runner size early, never revisit it, and run the same workload on a 4-vCPU runner that would complete in roughly the same time on a 2-vCPU runner for half the cost. The inverse is also true: some workloads genuinely benefit from a larger runner, and paying for a faster pipeline buys back wait time across the whole team. Rightsizing is a measurement exercise, not a default choice.
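One way to frame the measurement is to price each runner option on both sides of the ledger for a single run. The sketch below assumes one engineer waits for the full duration; the per-minute runner rates and the measured durations are hypothetical inputs you would replace with your own.

```python
def cost_per_run_usd(duration_min, runner_rate_per_min_usd, hourly_rate_usd=75.0):
    """Compute plus wait cost for one run, assuming one engineer waits throughout."""
    compute = duration_min * runner_rate_per_min_usd
    wait = duration_min / 60 * hourly_rate_usd
    return compute + wait


# Hypothetical rates: the larger runner is assumed to cost twice as much per minute.
SMALL_RATE, LARGE_RATE = 0.008, 0.016

# Workload A: I/O-bound, measured at 10 minutes on either runner size.
a_small = cost_per_run_usd(10, SMALL_RATE)
a_large = cost_per_run_usd(10, LARGE_RATE)

# Workload B: CPU-bound, measured at 10 minutes on small and 6 minutes on large.
b_small = cost_per_run_usd(10, SMALL_RATE)
b_large = cost_per_run_usd(6, LARGE_RATE)

print(f"A: small ${a_small:.2f} vs large ${a_large:.2f}")
print(f"B: small ${b_small:.2f} vs large ${b_large:.2f}")
```

For workload A the larger runner only adds cost. For workload B the extra runner spend is small next to the wait time it buys back, which is why the decision has to come from measured durations rather than from the default.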

Dead pipelines

Workflows that run on every push but whose results nobody reads. Nightly builds on branches nobody has merged in six months. Security scans that fail silently and alert nobody. Each is compute spent on a signal nobody acts on. On a customer workload we observed roughly a third of compute minutes on scheduled runs alone, which is the upper bound on what a quarterly audit might strip out: not every scheduled run is dead, but dead runs are usually scheduled.

Red-main blocks

Main is red. The team cannot merge. Every engineer waiting on a merge is blocked; every engineer whose work depends on the broken feature is blocked on them. A single hour of red main on a 100-engineer team at $75 per hour is $7,500 of pure wait cost. The compute bill does not move; the wait bill is large.

Parallelism that does not parallelise

A matrix that fans out to eight jobs, where one job takes fifteen minutes and the other seven take two minutes each, still gives you a fifteen-minute pipeline: wall-clock time is set by the slowest job, while you pay for all eight jobs (29 job-minutes here, plus any setup each job repeats). Real parallelism requires balancing work across jobs: sharding tests, splitting matrix dimensions that actually vary, or doing shared work once and fanning out only the part that benefits from concurrency.
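Balancing shards is a scheduling problem with a simple greedy approximation. The sketch below uses longest-processing-time-first assignment: sort tests from slowest to fastest and give each one to the shard with the least work so far. It assumes you have historical per-test durations; the test names and timings are hypothetical, and this is one common heuristic rather than the method any particular CI provider uses.

```python
import heapq


def shard_tests(durations: dict[str, float], shard_count: int) -> list[list[str]]:
    """Assign tests to shards, slowest first, always to the lightest shard."""
    heap = [(0.0, index) for index in range(shard_count)]  # (load so far, shard index)
    heapq.heapify(heap)
    shards: list[list[str]] = [[] for _ in range(shard_count)]
    for test, seconds in sorted(durations.items(), key=lambda kv: kv[1], reverse=True):
        load, index = heapq.heappop(heap)
        shards[index].append(test)
        heapq.heappush(heap, (load + seconds, index))
    return shards


# Hypothetical historical durations in seconds.
durations = {
    "test_a": 300, "test_b": 200, "test_c": 200,
    "test_d": 100, "test_e": 100, "test_f": 60,
}
shards = shard_tests(durations, shard_count=2)
loads = [sum(durations[t] for t in shard) for shard in shards]
print(shards, loads)
```

The pipeline's wall-clock time is the heaviest shard's load, so the goal is to keep `max(loads)` close to the total divided by the shard count. The greedy pass does not guarantee an optimal split, but it usually removes the one-slow-job pattern described above.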

The detailed taxonomy across all of these, with detection methods and fixes, is worth a post on its own.

When wait time beats compute (usually) and when it does not (rarely)

The back-of-envelope crossover is straightforward. Wait time dominates compute when:

- Team size is non-trivial (roughly ten or more engineers)

- Pipelines gate merges (pre-merge CI, not just post-merge)

- Pipelines take more than a few minutes

That is most teams. Compute wins when the team is very small, the pipeline is already fast, and compute is expensive: a solo developer running heavy macOS builds on a GitHub-hosted Apple silicon runner can genuinely see the invoice exceed their own wait-time cost, because one engineer cannot wait more than one engineer's hourly rate.

For everything in between, the back-of-envelope math plays out in live data. On a customer workload in a recent 30-day window we measured a wait-to-compute ratio of 111×, just over two orders of magnitude.

The wider cost: organisational efficiency and business outcomes

Compute and wait time are the localised costs: numbers you can attribute to a specific workflow run and a specific engineer's calendar. Both end at the edge of the pipeline. The wider cost starts where the pipeline hands off to the rest of your delivery system, and it is where CI/CD moves from a Finance line item to a business concern.

Organisational efficiency: the delivery feedback loop

Every software team runs on feedback loops. Commit to CI, CI to review, review to merge, merge to deploy, deploy to production signal, signal to customer response. The duration of each loop determines how quickly the team learns whether the last decision was right, and how quickly it can adjust the next one. A slow or flaky pipeline widens the loop closest to the commit, and every loop downstream inherits the delay.

This is the signal that DORA metrics measure. Change lead time, deployment frequency, change failure rate, failed deployment recovery time, and deployment rework rate are each a reading on how healthy a specific loop is. Elite-tier DORA teams move change-ready work to production in under a day; low-tier teams take weeks. The pipeline is one of several contributors to the numbers, but it is the one visible at every commit, which makes it the lever most teams can actually move.

Business outcomes: why the loop matters

A slower feedback loop means slower learning. Features ship later. Defects reach customers for longer. Experiments take more calendar weeks to produce a verdict. Teams course-correct on bets that did not land after the competitor has already shipped the next bet. The business objective that depended on all of this (a revenue target, a customer-retention goal, a time-to-market commitment) slips proportionally, because the organisation could not complete the commit-to-customer loop quickly enough to meet it.

The DORA research is the most rigorous body of evidence for this link. High performers on the delivery metrics are also high performers on commercial outcomes, controlling for industry and size. The mechanism is the feedback loop: teams with tighter loops learn faster, ship more decisions per unit time, and recover from mistakes before they compound.

This is why CI/CD cost, taken seriously, is not a Finance line item. The compute bill and the wait-time bill matter, but the figure that ultimately moves the needle is whether the delivery loop is fast and reliable enough to let the business make and verify decisions at the rate it actually needs to.

Reducing each: practices first, then tooling

The most expensive mistake teams make with CI/CD cost is treating it as a tooling problem. It is a practices problem first.

Trunk-based development with small, frequent merges keeps any given pipeline close to the commit that caused the change, which shortens debug time and shrinks the batch of work each run is validating. Fast, reliable tests remove the rerun tax. A branching model that treats main as always-shippable removes the red-main-blocks category of wait cost. These are free: they cost discipline, not licence fees.

Tooling comes second. Once the practices are sound, tooling can compound the gains: selective test execution based on changed files, caching layers, rightsized runners, smart matrix strategies. The practical playbook for the tooling side is worth its own deep dive; the taxonomy above tells you which levers are there to pull.

The common thread is that every reduction on the wait-time side usually reduces compute too. A pipeline you make faster costs less in both categories. A flake you fix costs less in both. A dead pipeline you retire costs less in both. The numbers compound in the same direction.

Where to look first

If you are auditing cost for the first time, four numbers explain most of what you will find:

- p95 pipeline duration over the last 30 days. Wait time scales with p95 more than with median: the slow runs are what block the team. Trend it week over week.

- Rerun rate per workflow. A workflow that reruns often is paying double on both sides of the ledger and its reruns almost always trace back to a small number of flaky tests.

- Compute spend per developer per month. Normalising by team size strips out “the team grew” and exposes whether each developer's cost has actually changed.

- Share of pipeline minutes billed at an OS or runner-size multiplier. If a meaningful slice of your minutes runs on macOS or a 2x runner that does not need the extra capacity, the multiplier alone is a noticeable line item.

None of these numbers requires an expensive tool. They can be pulled from each provider's API or billing export with a modest amount of scripting. The harder problem is keeping them current, and keeping them comparable across providers when a team runs more than one.
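As a rough illustration of that scripting, the sketch below computes the four numbers from a list of run records. The `Run` fields are a hypothetical normalised shape, not any provider's API schema. The p95 uses the nearest-rank method, and rerun rate is assumed to mean reruns divided by first attempts.

```python
import math
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Run:
    # Hypothetical normalised record; map your provider's export into it.
    workflow: str
    duration_min: float
    attempt: int       # 1 for the first attempt, 2 or more for reruns
    multiplier: float  # billing multiplier, 1.0 for a standard Linux runner
    cost_usd: float


def p95(values):
    """Nearest-rank 95th percentile."""
    ordered = sorted(values)
    return ordered[math.ceil(0.95 * len(ordered)) - 1]


def audit(runs, developer_count):
    firsts, reruns = defaultdict(int), defaultdict(int)
    for run in runs:
        (firsts if run.attempt == 1 else reruns)[run.workflow] += 1
    total_min = sum(run.duration_min for run in runs)
    multiplied_min = sum(run.duration_min for run in runs if run.multiplier > 1)
    return {
        "p95_duration_min": p95([run.duration_min for run in runs]),
        "rerun_rate_by_workflow": {w: reruns[w] / n for w, n in firsts.items()},
        "spend_per_developer_usd": sum(run.cost_usd for run in runs) / developer_count,
        "multiplied_minute_share": multiplied_min / total_min,
    }


# Hypothetical sample: three Linux runs (one a rerun) and one macOS run.
runs = [
    Run("ci", 10, 1, 1.0, 0.08),
    Run("ci", 12, 1, 1.0, 0.096),
    Run("ci", 12, 2, 1.0, 0.096),
    Run("ios", 20, 1, 10.0, 1.60),
]
report = audit(runs, developer_count=2)
print(report)
```

Running the same function over each week's records gives the week-over-week trend. Normalising every provider's export into one record shape is also what makes the numbers comparable when a team runs more than one provider.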

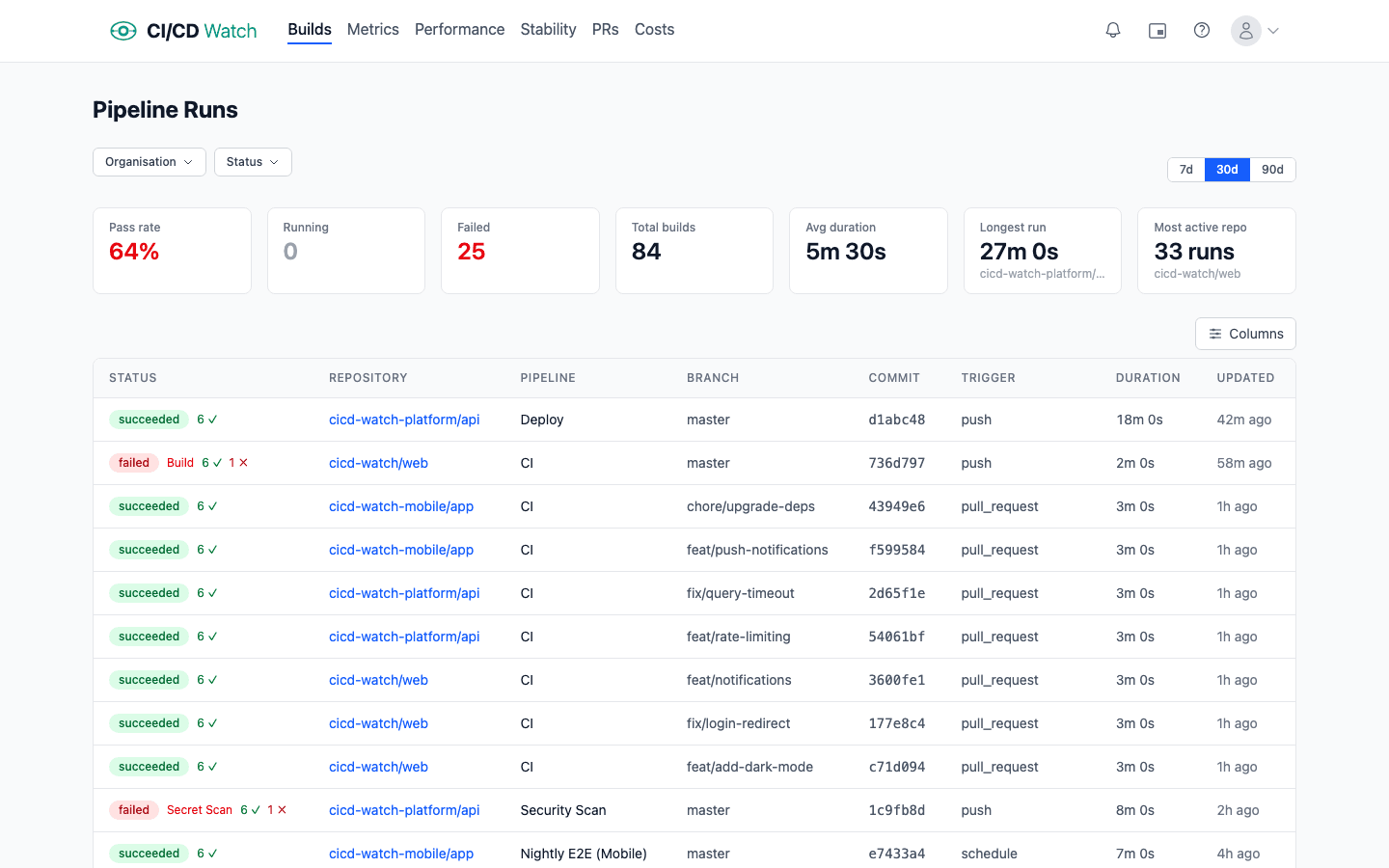

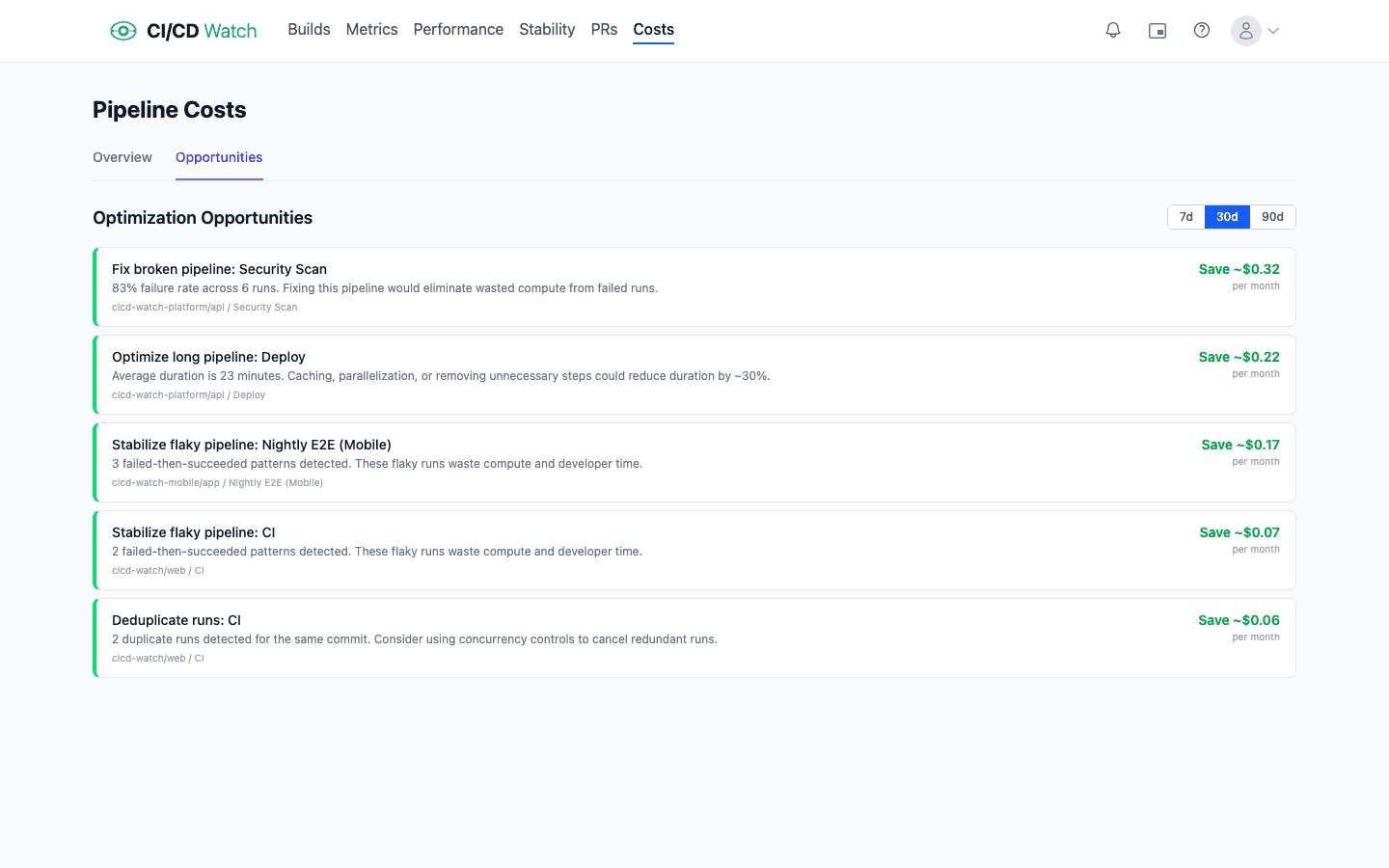

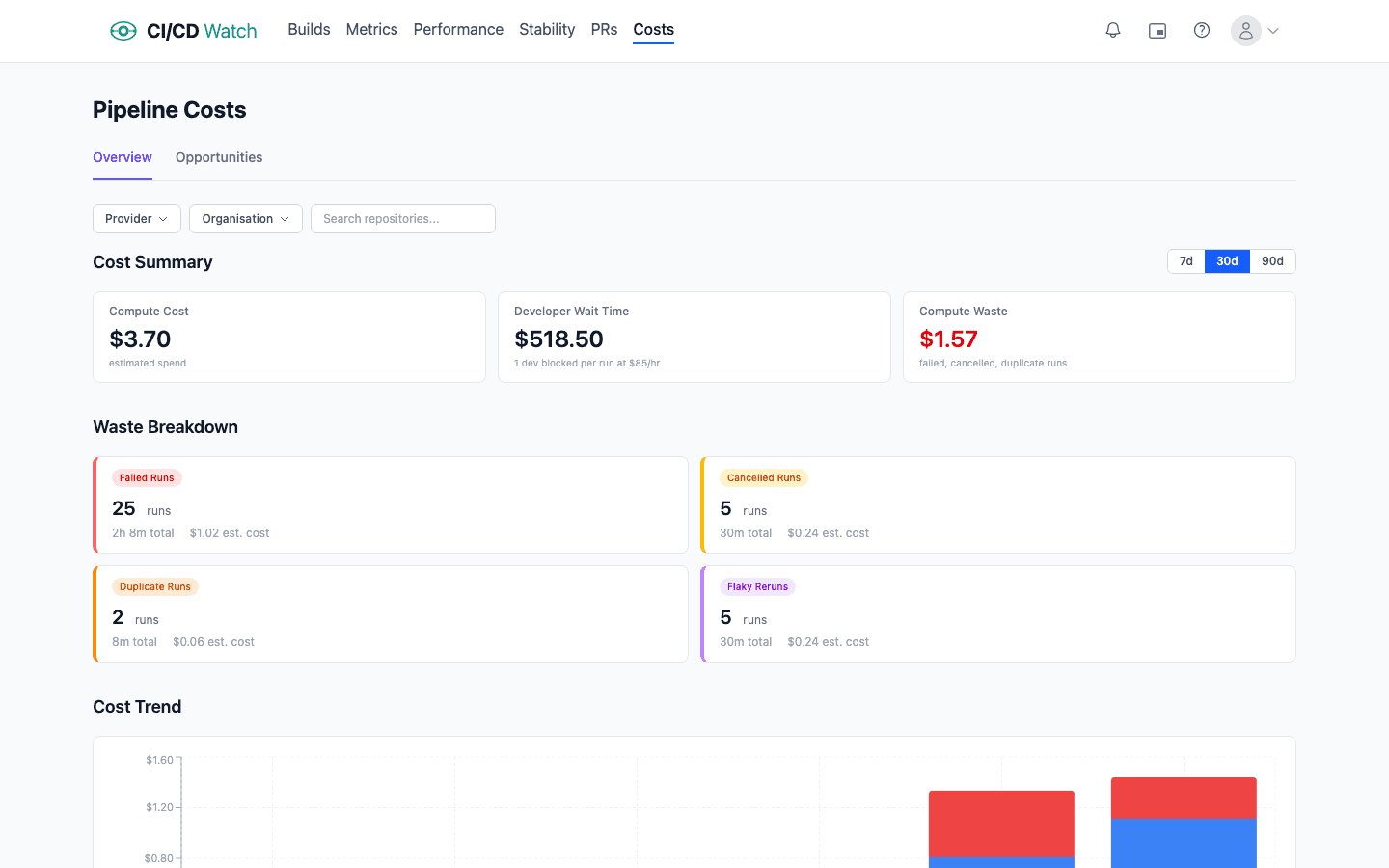

How CI/CD Watch surfaces cost

CI/CD Watch, a CI/CD observability platform that monitors pipelines across GitHub Actions, GitLab CI, Bitbucket Pipelines, CircleCI, Azure DevOps, and Jenkins, was built to make the compute-plus-wait model visible. The cost view starts from both components side by side, not the invoice in isolation.

Compute cost is derived from provider-reported billable minutes and the rate card for each runner type. Wait time is derived from pipeline duration and your team's configured hourly rate, applied to the developer attached to the run. Both numbers roll up the same way: per workflow, per repository, per provider, across whatever time window you ask for. How the numbers are derived, including the configurable hourly rate and per-runner compute rates, is documented in the cost-calculations reference.

The waste categories have their own views: reruns driven by flaky tests, oversized runners relative to actual utilisation, dead pipelines (workflows with no human audience), red-main blocks, and parallelism gaps. Each is expressed as both a compute number and a wait-time number, so the argument for fixing any individual one is legible to both Finance and Engineering.

On one real customer workload (13 repositories, 345 terminal runs in a 30-day window) we measured $16 in compute against $1,750 in developer wait time, a ratio of roughly 111 to 1. Annualised, that is $200 of runner minutes against $21,000 of developer wait time on a workload the team considers healthy. The invoice is the smaller number by two orders of magnitude, and cost conversations that start with it miss most of the money.

See the true cost of CI/CD

CI/CD Watch's Free tier covers pipeline monitoring for small teams. Connect a provider and see every workflow run across every repository in one dashboard, part of the broader CI/CD monitoring picture.

CI/CD Watch is built by 3CS Technologies Ltd. It started as an internal tool for tracking pipeline health across a mixed GitHub Actions and Jenkins estate. The same engine now powers the SaaS platform.